Introduction

Language models and their applications have become an integral part of many corporate strategies. However, as this technology continues to evolve, a more nuanced understanding of its use becomes essential. This article dissects two concepts that are often lumped together but serve distinct functions: prompt design and prompt engineering. We aim to establish a framework that differentiates between these two critical aspects of working with language models. Treat this article as an opinion piece intended to start a broader discussion.

Background

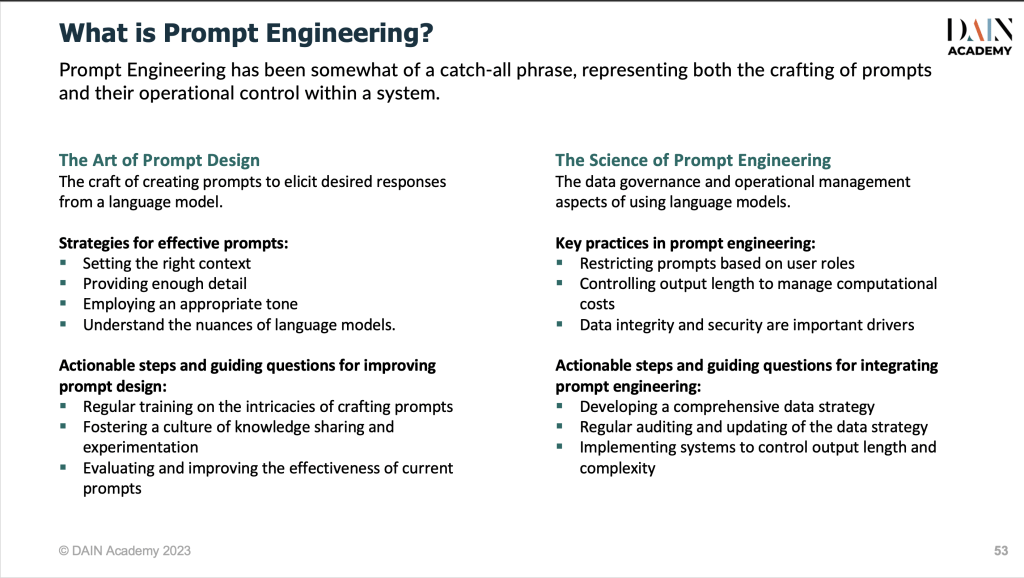

As language models like GPT-4 and its successors become more prevalent, discussions around their application often focus on “prompt engineering”. However, this term has become something of a catch-all, covering both the crafting of prompts and their operational control within a system. While the former has garnered much attention, the latter, which deals with crucial governance components, remains under-discussed.

Writing a good prompt is somewhat comparable to learning how to use a search engine to get the results we want: a crucial skill is describing the problem you want to solve specifically. However, in our practice of implementing large language models (LLMs) for organizations, we also often face concrete engineering tasks that come with deploying LLMs, such as controlling costs or making sure that only the “right” things are put into the prompt.

Hence, we argue that this broader operational aspect deserves its own definition. For this purpose, we propose distinguishing between the terms “prompt engineering” and “prompt design”.

The Art of Prompt Design

Prompt design is the craft of sculpting prompts to elicit desired outputs from a language model. Mastering it requires understanding the nuances of language models and how they respond to different prompts. Strategies such as setting the right context, providing enough detail, and employing an appropriate tone can together greatly enhance the quality and relevance of the model’s outputs. To get started with improving prompt design, consider these actionable steps and guiding questions:

- Training: Regularly train your team on the intricacies of crafting prompts. How well does your team understand the workings of language models?

- Knowledge sharing and experimentation: Encourage knowledge sharing and foster a culture of curiosity and experimentation. What platforms or forums exist for your team to share insights and experiences?

- Evaluation and improvement: Evaluate the quality of current prompts and iteratively improve them. How effective are the current prompts in eliciting desired outputs?
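
To make the design strategies above more tangible, here is a minimal Python sketch that contrasts a vague prompt with one that sets context, adds detail, and specifies a tone. The `PromptSpec` class, the `build_prompt` function, and the example wording are hypothetical illustrations, not part of any particular library or the only way to structure a prompt.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container for the design levers: context, detail, tone."""
    task: str
    context: str = ""
    details: str = ""
    tone: str = ""


def build_prompt(spec: PromptSpec) -> str:
    """Assemble a prompt string, skipping any lever that was left empty."""
    parts = []
    if spec.context:
        parts.append(f"Context: {spec.context}")
    parts.append(f"Task: {spec.task}")
    if spec.details:
        parts.append(f"Details: {spec.details}")
    if spec.tone:
        parts.append(f"Tone: {spec.tone}")
    return "\n".join(parts)


# A vague prompt: only the task is stated.
vague = build_prompt(PromptSpec(task="Summarize the report."))

# A more deliberate prompt: the same task with context, detail, and tone.
specific = build_prompt(PromptSpec(
    task="Summarize the report in three bullet points.",
    context="You are assisting a finance team preparing a board update.",
    details="Focus on revenue changes and open risks.",
    tone="Neutral and concise.",
))

print(vague)
print("---")
print(specific)
```

Keeping prompts in a structured form like this may also make it easier to share variants within a team and to compare them during evaluation.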

The Science of Prompt Engineering

Prompt engineering focuses on the data governance and operational management aspects of using language models. This might include practices such as restricting certain prompts to specific roles within a company in order to maintain data integrity and security, or controlling output length to manage computational costs. To begin integrating prompt engineering in your operations, here are some actionable steps and questions to consider:

- Strategy development: Develop a comprehensive data strategy that outlines the roles, responsibilities, and guidelines for prompt usage. Does your organization have clear policies regarding who can use which prompts?

- Audit and update: Regularly audit and update your data strategy in order to maintain data integrity and security. How often are these policies reviewed and updated?

- Control: Implement systems to control output length to manage computational costs. Do you have the infrastructure in place to control this?
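
As a rough illustration of the controls described above, the following Python sketch combines a role-based check on which prompts may be used with a per-role cap on output length. The role names, prompt identifiers, token limits, and the `authorize` function are all assumptions made up for this example; a real deployment would load such policies from its own access-management system.

```python
from dataclasses import dataclass

# Hypothetical policy: which roles may use which prompt templates.
PROMPT_ACCESS = {
    "summarize_public_docs": {"intern", "analyst", "manager"},
    "query_customer_records": {"analyst", "manager"},
    "draft_financial_forecast": {"manager"},
}

# Hypothetical per-role cap on output tokens, used as a simple cost control.
MAX_OUTPUT_TOKENS = {"intern": 256, "analyst": 512, "manager": 1024}


@dataclass
class PromptRequest:
    role: str
    prompt_id: str
    requested_tokens: int


def authorize(request: PromptRequest) -> int:
    """Return the output-token limit to apply, or raise PermissionError."""
    allowed_roles = PROMPT_ACCESS.get(request.prompt_id, set())
    if request.role not in allowed_roles:
        raise PermissionError(
            f"role {request.role!r} may not use prompt {request.prompt_id!r}"
        )
    # Unknown roles get a cap of 0, so nothing is generated by default.
    cap = MAX_OUTPUT_TOKENS.get(request.role, 0)
    return min(request.requested_tokens, cap)


# An analyst may query customer records, but the output length is capped.
limit = authorize(PromptRequest("analyst", "query_customer_records", 2000))
print(limit)

# An intern is refused access to the same prompt.
try:
    authorize(PromptRequest("intern", "query_customer_records", 100))
except PermissionError as err:
    print(err)
```

The returned limit would then be passed to whatever output-length setting your model provider offers. Denying unknown prompts and unknown roles by default is one possible design choice; it keeps the policy table the single place where access is granted.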

Comparing Prompt Design and Prompt Engineering

Distinguishing between prompt design and prompt engineering can bring more clarity and efficiency to language model applications. While prompt design focuses on crafting high-quality prompts, prompt engineering is centered on controlling and managing their application. Both elements are crucial for an optimized, well-rounded AI strategy for organizations.

Conclusion

Recognizing and separating the domains of prompt design and prompt engineering can enable organizations to develop a comprehensive approach to effectively integrating AI tools into their operations.

This understanding not only ensures more refined interactions but also allows for controlled, secure, and cost-efficient use of language models.

Key Takeaways

In order to improve prompt design, consider setting up regular training programs that update your team on the nuances of crafting prompts and understanding language model behavior. Encourage knowledge sharing and foster a culture of curiosity and experimentation. On the prompt engineering front, develop a comprehensive data strategy that outlines roles, responsibilities, and guidelines for prompt usage. Regularly audit and update this strategy to maintain data integrity and security. Also, consider setting up systems to control output length to manage computational costs.

What are your thoughts on this? Do you think we need a more elaborate differentiation between prompt engineering and prompt design?